Our Tag: Nemotron-3 Collection

Explore all our latest insights, tutorials, and announcements on AI workflow and tech.

Sovereign AI with Nemotron: Protecting Your IP in the Age of Open Weights

The Security of the "Open" Label

A major headline in March 2026 AI News is NVIDIA’s commitment to "Open Weights" for the Nemotron-3-Super family. This is a game-changer for businesses that value Intellectual Property (IP). By running Nemotron-3-Super locally through Ollama or on-premises NVIDIA NIMs, you keep prompts, documents, and trade secrets inside your own firewall. At Scalexa, we call this "Sovereign Intelligence." It removes the fear that your data will be used to train someone else’s model. We help you deploy these models in "Trusted Execution Environments," turning your AI from a potential leak into a private fortress of data. In a world where data is the new oil, Scalexa ensures you are the only one with the keys to the refinery. By choosing a sovereign, local model, you are telling your clients that their privacy is your highest technical priority. Scalexa is your partner in building an ethical, secure, and private AI future.

Model Mastery: Solving the Context Explosion [interlink(149)] and Nemotron vs. Llama 3.3 [interlink(150)].
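To make "running locally through Ollama" concrete, here is a minimal sketch of a client that only ever talks to a server on your own machine. It assumes Ollama is listening on its default local port; the model tag `nemotron-3-super` is a hypothetical placeholder, not a confirmed name, so check `ollama list` for the tag you actually have installed.

```python
import json
import urllib.request

# Assumption: Ollama's default local endpoint. Adjust if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"
# Hypothetical model tag used for illustration only.
MODEL_TAG = "nemotron-3-super"


def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request aimed at the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt)) as resp:
        return json.loads(resp.read())["response"]
```

Because the only URL in the code is `localhost`, the prompt never has to cross the network boundary; calling `ask_local_model("Summarize our NDA policy")` requires a running Ollama server with the model pulled.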

Agentic Reasoning: Using Nemotron-3-Super to Solve the "Context Explosion"

Mastering the 1-Million Token Window

As AI News reports, the defining challenge of 2026 is "Context Explosion": the massive amount of data generated when multiple AI agents collaborate. NVIDIA’s Nemotron-3-Super addresses this with a 1-million-token context window. At Scalexa, we’ve found that this eliminates the "Memory Drift" that usually happens in long business conversations. Imagine an AI that can read 1,500 pages of technical documentation and still remember the very first instruction you gave it. This creates a level of "Continuity Trust" that was previously impossible. Scalexa leverages this long-context capability to build complex support and research agents that don’t just guess; they work from the full history of your project. We don’t just give you a tool; we give you a system that keeps the whole conversation in view. Scalexa is your architect for a future where your AI never forgets the details that matter most.

Model Mastery: Solving the Context Explosion [interlink(149)] and Nemotron vs. Llama 3.3 [interlink(150)].
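As a rough check on the "1,500 pages in a 1-million-token window" figure, the sketch below works out the implied tokens per page and tests whether a document set fits. The window size and page count come from the article; the function names and the per-page token estimates are illustrative assumptions, since real token counts depend on the tokenizer and the page layout.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000  # context window quoted in the article
PAGES = 1_500                      # page count quoted in the article


def tokens_per_page(window: int = CONTEXT_WINDOW_TOKENS, pages: int = PAGES) -> float:
    """Average token budget per page implied by the two figures."""
    return window / pages


def fits_in_window(
    page_count: int,
    est_tokens_per_page: int,
    reserved_for_output: int = 0,
    window: int = CONTEXT_WINDOW_TOKENS,
) -> bool:
    """True if the estimated input plus reserved output tokens fit in the window."""
    needed = page_count * est_tokens_per_page + reserved_for_output
    return needed <= window


if __name__ == "__main__":
    print(f"Implied budget: {tokens_per_page():.0f} tokens per page")
    print("Fits at 600 tokens/page:", fits_in_window(1_500, 600))
    print("Fits at 700 tokens/page:", fits_in_window(1_500, 700))
```

The implied budget is about 667 tokens per page, so dense pages can exceed the window even at 1,500 pages; measuring real token counts before loading a full document set is the safer design.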

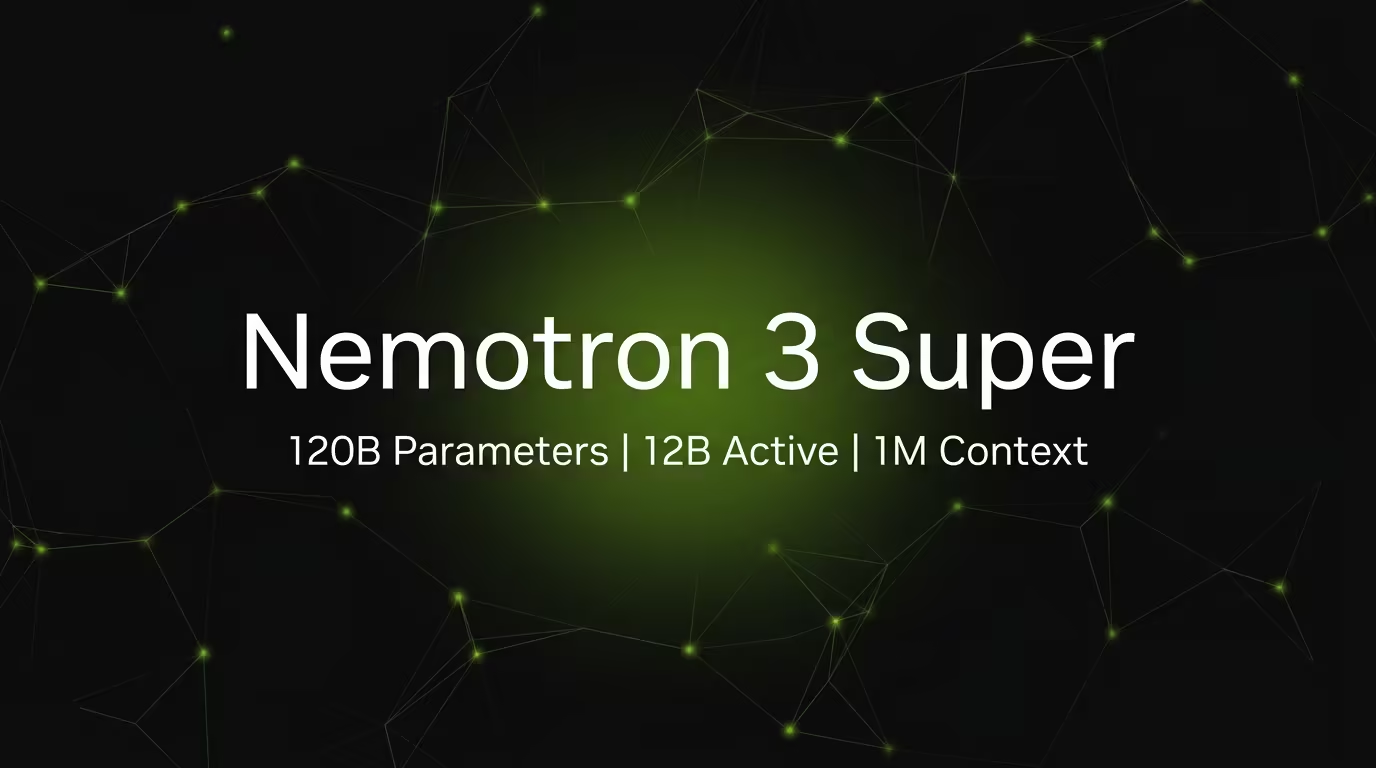

The Nemotron-3-Super 120B: Why NVIDIA Just Changed the Local AI Game

The Efficiency of "Active" Intelligence

In the most recent AI News for March 2026, NVIDIA has unveiled the Nemotron-3-Super, a massive 120B parameter model that reframes how we think about "heavy" AI. Despite its size, it uses a Mixture-of-Experts (MoE) architecture that only activates 12B parameters during inference. At Scalexa, we’ve observed that this "Latent MoE" design allows businesses to run enterprise-grade reasoning locally with 5x higher throughput than previous models. This isn’t just a technical spec; it’s a breakthrough for CEOs who want the power of a giant model without the sluggish latency. By running Nemotron-3-Super via Ollama, you gain a private, high-speed "digital brain" that remains entirely within your control. [interlink(151)] Scalexa helps you bridge the gap between cloud-level intelligence and local-speed execution, ensuring your automated workflows are as responsive as they are smart.

Compare Engines: Nemotron vs Llama 3.3: [interlink(150)] or solve the Context Explosion: [interlink(149)].
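The "active" parameter idea can be put into numbers with a short calculation. The sketch below uses only the two figures from the article (120B total, 12B active); the function name is illustrative, and the result is a parameter ratio, not a measurement of the 5x throughput figure quoted above.

```python
TOTAL_PARAMS = 120e9   # total parameters quoted in the article
ACTIVE_PARAMS = 12e9   # parameters activated per token, per the article


def active_fraction(active: float = ACTIVE_PARAMS, total: float = TOTAL_PARAMS) -> float:
    """Share of the model's parameters that take part in each forward pass."""
    if total <= 0 or not 0 <= active <= total:
        raise ValueError("expected 0 <= active <= total and total > 0")
    return active / total


if __name__ == "__main__":
    share = active_fraction()
    print(f"Active share per token: {share:.0%}")
```

With these figures, roughly one tenth of the parameters do work for any given token, which is why per-token compute looks closer to a 12B model than to a dense 120B one.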